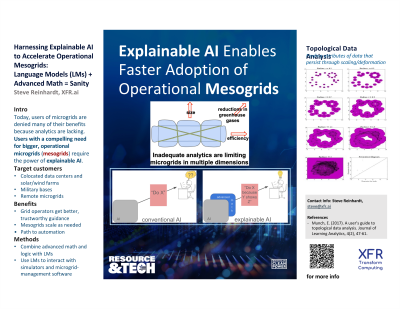

Harnessing Explainable AI to Accelerate Operational Mesogrids: Advanced Math + LLMs = Sanity

Tuesday, October 1, 2024

5:00 PM - 6:00 PM MST

Location: Regency D

Steve Reinhardt, MS (he/him/his)

CEO

Transform Computing, Inc.

Eagan, Minnesota

Poster Presenter(s)

Poster Description: To forestall the worst impacts of climate change, the US energy grid must quickly incorporate massive renewable-generation capacity, mediated by massive storage capacity. That capacity comes from a fleet of devices whose composition is itself changing rapidly as new technologies become commercially relevant. The growing number of devices, together with their bidirectionality, requires radically new techniques to deliver the necessary reliability, resilience, flexibility, and cost-effectiveness. Generative AI has delivered stunning capabilities, but not yet in operational contexts, largely because it has not gained the trust and confidence of the human supervisors in those contexts. Transform Computing, Inc. (XFR) is developing explainable AI (XAI) that can earn human trust by providing objective rationales for its conclusions, even in scientific and engineering domains like the energy grid (in contrast to domains that depend purely on natural language). XFR is initially targeting operational use cases such as (a) avoiding and mitigating electromagnetic transients (EMTs) when grid-connecting renewable inverter-based resources (IBRs), which lack rotating mass, and (b) detecting and avoiding emergent issues in mesogrids, analogous to the distributed-denial-of-service (DDoS) attacks that occur in computer networks. This presentation will describe these use cases and the potential strengths and weaknesses of XAI solutions for these problems.